Reflecting On The Reflecting Pool.

Posted on | May 29, 2026 | 4 Comments

Jenny and Forrest (July 6, 1994) – Forrest Gump.

Mike Magee

The historic pool is, of course, not a swimming pool, although at 18 inches deep at the edges and 30 inches deep in the center, the National Archives does have a 1926 photo of children safely wading in it. Seven decades later, a fictional war-torn, Viet Nam vet, Forrest Gump, was reunited with his childhood first love, Jenny Curran, in a joyful embrace in these very same sacred waters.

When 57 year old architect Henry Bacon first encountered the site, he had good reason to be discouraged. In 1915 it was a marshy mess called Kidwell flats. And yet construction at West Potomac Park of the Lincoln Memorial had begun, and on May 30, 1922 that historic monument was dedicated. It sat on 122 concrete pillars driven up to 65 feet into the mud in a search for solid bedrock.

The actual excavation for the accompanying Reflecting Pool began on March 25, 1920 and was completed in 1923. At 2030 feet, Bacon’s creation has been considered a work of art that was “designed to vanish.” Nonetheless engineers today agree, its construction was botched. The asphalt and tile base was set on “soft, dredged riverbed with the consistency of a wet sponge.” There were no underlying support pilings or infrastructure.

By 1980, the pool, under the weight of 7 million gallons of water, had sunk 12 inches. The solution, to pour a new concrete floor, only increased the weight and worsened the problem. By 1986, cracks and leaks allowed a loss of 500,000 gallons of water a week. After a two decade battle for funding, a two year $34 million renovation in 2010 drove 2,113 40-foot timber pilings into the marshy clay floor, allowing a celebratory August 31, 2012 reopening.

If a nightmare for the architects and engineers, it was a dream site for visitors, declared by the Parks Service to be “the most photographed civic stage in America.” When “times of trouble” have appeared on our horizon, we Americans gravitate to this spot, as flawed as it might be. We came in numbers, 250,000 strong, on August 28, 1963 to hear Martin Luther King Jr. voice “I Have a Dream.” Jenny and Forrest felt a similar draw three decades later.

Of course, one man’s dream is another man’s nightmare. Turns out, one of Trump’s great ideas was to give the Lincoln Memorial Reflecting Pool a remake just in time for his “best ever” 250th Birthday Celebration coming up. Seeing it. Feeling it. He acted on the impulse to change it to “American Flag blue.” (His original choice was Bermuda turquoise.)

This no doubt caused turn of the century architect Henry Bacon to roll over in his grave. Bacon’s well-documented original intent in selecting a “plain asphalt and tile bottom” was to create a mirror effect to reflect the marble Lincoln Memorial. The National Park Service, in their redo in 2012, chose grey for the floor to make it even more reflective.

As with this iconic national treasure, our democracy itself struggles these days to uncover strength and ethical moorings in shifting muddy grounds. It’s a lot.

Sam Sifton, host of the New York Times “The Morning,” said as much this week when he wrote, “Those problems may come as no surprise to anyone who owns a pool. Think of the upkeep and the ongoing costs: chemicals, pumps, cleaning, draining, covering, uncovering, filling, filtering, repeat. Something’s always going wrong. I have a friend with a pool. (Beats having a pool.) I asked him about it. He was forceful: ‘It’s a lot.’”

My pool here in Connecticut is about to be opened next week. And I’ve picked up the chemicals, readied the cleaning robot, and power washed the deck in preparation. The unknown is whether the ancient liner will last one more year or split open and demand replacement. We’ll see. And so it goes with Donald Trump.

Our pool and we are aging in tandem. It doesn’t get much use compared to years ago. But we’ll have a crowd once again for my wife’s “Almost 80 party” in late June. So I’m under a bit of pressure this year. But nothing like they’re going through in Washington.

Outwitting HIV

Posted on | May 21, 2026 | Comments Off on Outwitting HIV

Mike Magee

In a 1996 JAMA editorial Nobel Laureate Joshua Lederberg MD wrote “Our fight with microbes is far from over …odds are tipped in their favor…they outnumber us a billion fold, and mutate a billion times more quickly…pitted against microbial genes, we humans mainly have our wits.”

Now three decades later, our scientists remain in a “battle of wits” with this amazing viral foe, but even without a vaccine, have maintained a slight edge for humanity. Experts recently confirmed that we are unlikely to have a vaccine bullet by 2030. And it’s not because we haven’t tried. There have been more than 250 official HIV vaccine trials, with fewer than 10 making it past the safety threshold to test efficacy – and the best performer only had a moderate success rate in triggering some immunity in 31%.

HIV is just a bad actor according to Professor Anna Durbin at the Bloomberg School of Public Health at Johns Hopkins. To start with, it embeds its chemistry in the host’s DNA genome, blurring the boundaries between “self” and “non-self.” Most of our successful vaccines focus on a protein portion of the virus envelope or capsule. But the HIV virus has a “glycan shield” – a protein envelope that incorporates around 95 different sugar molecules which shield or disguise the viral protein from detection by our immune system. As one expert described it, “The immune system’s antibodies approach the virus and effectively see a blurry cloud of sugars rather than the vulnerable protein underneath.”

The second problem is that the virus’s “sloppy gene duplication” is riddled with mutations. This yields dozens of different versions, each with endless subtype variations. This is not typical disciplined viral behavior. Today’s measles viral genome, for example, is nearly identical to its late 20th century version.

And finally, HIV’s favorite target for invasion is the CD4 lymphocyte, otherwise known as the “Helper T-cell.” That happens to be the cellular key that unlocks our entire immune apparatus. This virus effectively decapitates the lead generals of our defensive force. And yet, we’re gaining on the virus. How have we done it?

First, by focusing on two “work-arounds” that trigger “passive immunity” without the help of our own immune machinery. Three decades ago, breakthrough discoveries first offered a glimmer of hope in the form of antiretroviral medications. With a variety of different combined therapy approaches, HIV/AIDS emerged as “no longer a death sentence,” but a chronic disease, like diabetes, that could be managed. In the modern era, this effective approach has spawned PrEP, or “Pre-exposure Prophylaxis,” – a preventive regimen for HIV negative individuals who are at risk of contracting HIV.

This regimen, generally combining the two anti-HIV meds, tenofovir and emtricitabine, prevents HIV replication if an individual is exposed to the virus. This cut transmission through sexual contact by 99%, and from illicit drug injection by 74%. The challenge has been access – especially in under-developed countries. But last month, Gilead Pharmaceuticals, teaming up with The Global Fund and PEPFAR (President’s Emergency Plan for AIDS Relief) agreed to provide their new antiretroviral drug, lenacapavir (LEN) at cost. In trials, the drug was 99% effective in keeping individuals HIV negative. As important, it is a twice a year injectable that could make a world of difference in developing nations, especially when it comes to transmission of the virus from HIV+ mothers to newborns through pregnancy and breastfeeding.

Scientists have known for some time that this population is key to combating HIV/AIDS. The chances of a newborn contracting HIV from an infected mother are 1 in 2. Contrast that with unprotected sex (1 in 72) and IV drug use (1 in 158), and it was clear to policy makers where to focus. Three decades ago, 1 in 4 infants born in Uganda were HIV+. That translated into 32,000 HIV infected children per year. Today it is fewer than 5,000. How? 1) All expectant parents are HIV tested. 2) If positive, they receive anti-retroviral meds.

The latest WHO stats show progress is indeed possible:

“At the end of 2024, 77% of people living with HIV were accessing antiretroviral therapy, up from 24% in 2010. Globally, there were 1.1 million pregnant women with HIV in 2024, of which an estimated 84% received antiretroviral drugs to prevent mother-to-child transmission. At the end of 2024, there were 1.4 million children aged 0–14 years living with HIV globally, down from 2.7 million in 2010.” Clearly there is still work to be done. One in six pregnant women with HIV is still not under treatment.
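The “one in six” figure follows directly from the WHO numbers quoted above. Here is a minimal Python sketch of that arithmetic, using only the 2024 figures in the quote. The variable names are illustrative, and because the inputs are rounded estimates, the results are approximate.

```python
# Figures taken from the WHO statement quoted above (2024 estimates).
pregnant_with_hiv = 1_100_000   # pregnant women living with HIV worldwide
treated_share = 0.84            # estimated share receiving antiretroviral drugs

# Whatever share is not treated is the gap still to be closed.
untreated_share = 1 - treated_share
untreated_count = pregnant_with_hiv * untreated_share

print(f"Untreated share: {untreated_share:.0%}")
print(f"Approximate number untreated: {untreated_count:,.0f}")
```

An untreated share of about 16 percent is a little under one in six, or roughly 176,000 women on these estimates.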

The second “work-around” is equally promising. It is what the NIH has labeled a “passive immunization strategy” – monoclonal antibodies. Research in animals, dating back to 2014, found that animals with long-standing HIV sometimes develop “broadly neutralizing antibodies” that effectively stop a whole range of different genetic subtypes of HIV. A decade later, synthetic engineered copies of these natural antibodies are being tested. Challenges remain, including the need for continued infusions, perhaps every six months, to keep formerly HIV+ individuals in “permanent remission.”

A summary report in Smithsonian magazine six months ago stated, “This year, researchers reported a breakthrough that suggests a ‘functional’ cure for HIV—a way to keep the virus under control long-term, without constant treatment—may indeed be possible. In two independent trials using infusions of engineered antibodies, some participants remained healthy without taking antiretrovirals, long after the interventions ended.”

The final word goes to Johns Hopkins Bloomberg School of Public Health’s Morgan Coulson, who recently wrote, “The history of HIV vaccine research is a long record of promising ideas that didn’t translate into protection in large trials. What makes the current moment different is that researchers have, for the first time, demonstrated they can deliberately guide the human immune system toward producing the kind of antibodies known to neutralize HIV broadly. Whether that initial success can be built into full protection is the central question for the next decade of research.”

Tags: AIDS > Anna Durbin > Bloomberg School of Public Health > HIV > HIV transmission in pregnancy > Johns Hopkins > Joshua lederberg > Morgan Coulson > passive immunity > PrEP > Uganda > WHO

Hantavirus – Population Vulnerability, Transmissibility, & Virulence

Posted on | May 12, 2026 | 2 Comments

Note: There was a prior outbreak of the Andes variant Hantavirus reported in the New England Journal of Medicine 6 years ago.

Mike Magee

In 1948, General George Marshall, reflecting on the success of the World War II vaccine program, famously stated “We now have the means to eradicate infectious disease.” A frequent but untrue urban legend attributed incorrectly to U.S. Surgeon General William H. Stewart nearly two decades later was that it was time to “close the book on infectious diseases.”

The “end of scientific hubris” would be quietly declared twenty-two years later, on June 5, 1981, on page two of the CDC’s MMWR publication with the headline “Pneumocystis Pneumonia – Los Angeles.” Wrestled to the ground, but still with no definitive cure for HIV/AIDS, Covid-19 would arrive on our shores in 2019 and ultimately claim 1.3 million American lives (and 7.1 million worldwide), and it could have been much worse had mRNA vaccines not arrived in the nick of time.

The Institute of Medicine in 1992 took a broad view of the worldwide threat with its publication of “Emerging and Reemerging Infections.” Minnesota Epidemiologist Dr. Michael Osterholm caught the spirit of that publication in his testimony to Congress on May 31, 1996 when he stated, “I am here to bring you the sobering and unfortunate news that our ability to detect and monitor infectious disease threats to health in this country is in serious jeopardy.”

That same year, Nobel Laureate Joshua Lederberg MD, in a JAMA editorial wrote, “Our fight with microbes is far from over …odds are tipped in their favor…they outnumber us a billion fold, and mutate a billion times more quickly…pitted against microbial genes, we humans mainly have our wits.”

That’s not very encouraging when you consider the fact that our current President, on April 23, 2020, mused in an official coronavirus televised briefing, that somehow internalizing disinfecting bleach might resolve the infection. Now six years later, he has single-handedly dismantled the scientific research and public health leadership of our nation, and left the top post in the hands of our most notorious vaccine denier, RFK Jr.

So here we are, at the front end of a now familiar story. There’s an outbreak in a distant site. Some people have died, rather suddenly. Evacuations and surveillance have occurred. Information released is contradictory and confusing. The President (thankfully) is distracted for the moment by plans for a Ballroom and Reflecting Pool. And alarms are just beginning to be sounded.

What do we know about Hantavirus, or more specifically about the Andes variant of Hantavirus?

Let’s set the stage. There are three measures that matter when it comes to assessing epidemic risk from a microorganism – 1) Population Vulnerability, 2) Transmissibility of the organism, 3) Virulence. Consider the current Measles epidemic in the U.S. Formerly largely eradicated, RFK Jr. and his allies have managed to ignite a modern day epidemic by encouraging families to avoid accessing the highly effective measles vaccine. In so doing, they have created pockets of citizens who have never been exposed to either the active organism or elements of it in the constructed and harmless vaccine. These pockets are not immune to the infectious agent, and without herd immunity, are highly vulnerable.

Secondly, it turns out Measles is one of the most transmissible pathogens that exists. On average, one infected individual within an unprotected human population, will spread the disease to 16 others. Finally, and mercifully, Measles causes a great deal of morbidity, but relatively little mortality. It is fatal in roughly 1.3% of those who are infected.

Now for comparison, let’s consider the Andes version of Hantavirus (ANDV Hantavirus). First, it bears repeating, while there are many versions of the Hantavirus, largely spread by contact with rodent feces and historically not a huge threat to humans, that is absolutely not the case with the Andes version. Through mutations, it has acquired the capacity to spread by aerosolization from one infected human to another.

As for vulnerable populations, except in small geographic centers such as the Andes region of Argentina, the vast majority of humankind has never been exposed to the Andes version of Hantavirus. This suggests massive potential population vulnerability. There is no vaccine currently, but scientists are already at work developing one.

As for transmissibility, infected individuals have historically passed the organism on to two others on average. But this likely does not apply to the Andes mutant. In the recent cruise outbreak, among 150 voyagers, 11 are known to have contracted the disease, and 3 died (27%).

Finally, when it comes to virulence, the Andes Hantavirus is quite deadly. Since surveillance began in the U.S. in 1993, there have been 890 cases, mostly in the southwest and mainly involving a different variant, the Sin Nombre Hantavirus. The fatality rate for this group was 35%. European outbreaks with less virulent variants have had fatality rates below 15%.

Where are we now? We do not know for certain. The virus can take six, and even eight weeks, to become symptomatic. The W.H.O. has taken the lead in surveillance and follow-up. The MV Hondius carried 140 passengers and crew, now dispersed across the globe. Two days ago a French woman was hospitalized at Bichat Hospital in Paris, and became the 11th traveler diagnosed with the illness. The W.H.O. says more cases are to be expected. The 32 ship crew members remain quarantined on the ship until it docks in the Netherlands. 18 Americans remain under surveillance at a secure medical facility with 16 in Omaha, Nebraska and 2 in Atlanta, Georgia. One has tested positive with mild symptoms.

Eight years ago, in 2018, there was an outbreak of the Andes variant hantavirus in the village of Epuyen, Argentina. The official report read, “On November 3, 2018, the man attended a birthday party for 90 minutes along with around 100 other people in the village in Argentina’s Chubut Province, near the Chilean border.” 34 were infected and 11 died (32%). The fact that the deaths happened rapidly paradoxically limited the infection’s wider spread. Of the 80 health care workers who managed these illnesses, none contracted the disease.
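The fatality percentages quoted for the two person-to-person outbreaks are simple ratios of deaths to known cases. Here is a minimal sketch using only the counts given in this post. The function name `case_fatality_rate` is illustrative, and the cruise figures are preliminary and may change as more cases are identified.

```python
def case_fatality_rate(deaths: int, cases: int) -> float:
    """Return deaths as a percentage of known cases."""
    if cases <= 0:
        raise ValueError("cases must be positive")
    return 100 * deaths / cases

# (deaths, cases) as reported in this post; the 2026 counts are still changing.
outbreaks = {
    "Epuyen, Argentina (2018)": (11, 34),
    "Cruise outbreak (2026)": (3, 11),
}

for name, (deaths, cases) in outbreaks.items():
    print(f"{name}: {case_fatality_rate(deaths, cases):.0f}%")
```

With so few cases in each outbreak, both percentages carry wide uncertainty and should be read as rough indicators rather than precise rates.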

A thorough epidemiologic investigation of that event was published two years later in the New England Journal of Medicine. Here were their summary observations at the time.

- “The super-spreading capability of the ANDV Epuyén/18−19 strain shows a facility for sustaining continuous chains of transmission if no control measures are enforced.”

- “The person-to-person transmission calls for a careful evaluation of the epidemic potential and a biologic risk assessment of ANDV strains and viruses.”

- “The absence of evidence for ANDV adaptation within or between hosts or for differences in viral diversity between spreaders and nonspreaders indicates that permissive ecology and social factors have a more substantial influence than genetic changes in sustaining person-to-person transmission in human hosts.”

- (Compared to a strain 22 years earlier) “few genomic mutations were identified among the strains involved in the outbreak that were transmitted from person to person.”

Appreciating our prior performance with Covid, the life saving and timely appearance of mRNA vaccines, and two person to person super-spreader outbreaks in 8 years, our researchers need to have their eyes on the ball. Specifically they should:

- Compare the current ANDV hantavirus genome to the 2018 and 1996 variations and publish the genomes and their variants.

- Create an mRNA vaccine for the 2026 ANDV hantavirus.

- Institute mandatory screening for 2026 ANDV hantavirus for all patients admitted to U.S. hospitals with acute severe respiratory infections.

Tags: 2018 outbreak hantavirus > 2026 outbreak hantavius > Andes variant of hantavirus > ANDV > Argentina > Joshua lederberg > michael osterholm > NEJM review of 2018 ANDV outbreak

AI Assisted Measurement of The Thymus – The “Fountain of Youth?”

Posted on | May 5, 2026 | Comments Off on AI Assisted Measurement of The Thymus – The “Fountain of Youth?”

Mike Magee

For the past eight Springs, my calendar in May has included a 90 minute lecture for the Presidents College at the University of Hartford. Both the commitment and the topic are chosen 9 months earlier. The long lead time allows enough space to develop the in-depth research and slide deck to support topics that are often new to me. For example, this year’s lecture is titled “Why Immunology Could Revolutionize How We Fight Disease” and covers two centuries of discoveries culminating in the 2025 presentation of the Nobel Prize to Mary E. Brunkow, the 28th member of the American Association of Immunologists to receive the honor, for her elucidation of the role of Regulatory T-cells (Tregs).

The May lecture provides a baseline for a more expansive 3-session presentation of the topic in the Fall. Between Spring and Fall, the topic, its visuals and insights, facts and figures, expand and (hopefully) refine the narrative. So over the years, I have grown accustomed to maintaining an “eyes wide open” approach throughout the summer, for new content to appear that deserves inclusion in the Fall course.

A prime example landed on my desk two weeks ago in the form of a Nature article titled “Thymic health consequences in adults.” The article’s significance was rapidly broadcast by a range of popular science publications like Scientific American, whose March 18th headline read “This overlooked organ may be more vital for longevity than scientists realized.” It dealt with a bias I had encountered in working up the topic, that the thymus devolved over a lifetime, giving up its role as the creator of a repertoire of disease and cancer fighting T-cells which somehow became peripherally dispersed and almost self-guiding as independent players in our adaptive immunity system.

My final slide for next week’s lecture is both an excuse and a teaser. It acknowledges the many topics we have not covered, but also implicitly promises that our three session program in the Fall will deliver new material in these eight areas. As you can see, the last entry (#8 on my list) is “Immunosenescence (Aging).” My own notes on the topic had already referenced that the thymus shrinks with age along with its production of T-lymphocytes of varying types. I also had captured that this decline might suggest why “inflammaging” (increased levels of measurable inflammation) increases as we age, as well as the theory that a decrease in T regulatory lymphocytes might cause some blurring of the line between recognition of “self” versus “non-self,” and in so doing leave our immunologic defenses down when it came to cancer cell eradication as we aged.

The groundbreaking reporting in response to the Nature article of course went much further. Mass General publications trumpeted, “Long Dismissed in Adult Health, the Thymus May Be Critical for Longevity and Cancer Treatment,” and global outlets expanded with “Once dismissed as biologically obsolete after adolescence, the thymus is now being reclassified as a central regulator of immune aging, with new evidence linking its health to survival, cancer resistance, and how the human body ages itself.”

In their own Abstract, the authors of the Nature publication were somewhat more reserved, and yet the message is still remarkably consequential. They write, “These findings reposition the thymus as a central regulator of immune-mediated ageing and disease susceptibility in adulthood, highlighting its potential as a target for preventive and regenerative strategies to promote healthy ageing and longevity.”

Two Springs ago, at this time, I was putting the finishing touches on my Presidents College 2024 address titled “AI and Medicine.” Twenty four months later, I remain ever alert, having covered many additional breakthroughs, and the self-accelerating learnings of generative AI, and in Medicine especially. So not surprisingly what caught my eye in the case above was hardly even mentioned by reviewers who were so excited by the primary clinical findings.

My question was, “How did they measure thymic functionality?” The short answer was, they measured it with the help of AI deep learning.

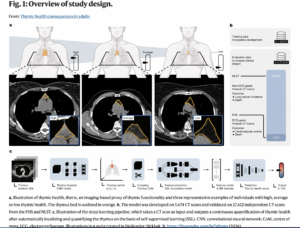

As the authors explained, “In this study, we investigated the impact of thymic functionality, here called thymic health, in adults… For quantification of thymic health, we developed a deep learning system using an independent dataset of 5,674 individuals to determine compositional radiographic characteristics of the thymus as a proxy for its functionality. The system takes a CT scan as input and provides the automatic continuous thymic health estimate as output.”

“We applied the system to prospectively collected data from a total of 27,612 individuals from two cohorts, including 2,581 participants in the FHS and 25,031 participants in the NLST (Fig. 1)… For outcome analyses, participants were categorized as low, average or high thymic health based on the bottom 25%, middle 50% and top 25% of the population.”
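The grouping rule the authors describe (bottom 25%, middle 50%, top 25%) can be sketched in a few lines of Python. This is an illustration only, not the authors' code: the scores below are invented numbers, the function name `categorize` is hypothetical, and the handling of values that fall exactly on a cutoff is an assumption.

```python
import statistics

def categorize(scores):
    """Label each score low / average / high using quartile cutoffs."""
    # quantiles(n=4) returns three cut points: Q1, the median, and Q3.
    q1, _, q3 = statistics.quantiles(scores, n=4, method="inclusive")
    labels = []
    for score in scores:
        if score < q1:
            labels.append("low")       # bottom quarter of the population
        elif score > q3:
            labels.append("high")      # top quarter of the population
        else:
            labels.append("average")   # middle half
    return labels

# Hypothetical thymic health estimates, invented purely for illustration.
example_scores = [0.12, 0.35, 0.41, 0.48, 0.55, 0.63, 0.77, 0.91]

for score, label in zip(example_scores, categorize(example_scores)):
    print(f"{score:.2f} -> {label}")
```

Grouping by rank within the study population, rather than by a fixed score threshold, means that “low” and “high” are relative to the other participants and not to an absolute clinical standard.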

The capacity to demonstrate different levels of thymic functionality turned out to be groundbreaking when cross-referenced against decades-long longitudinal databases. Associations with cardiovascular disease and lung cancer; history of smoking, obesity, high HDL levels; disabilities, morbidity and mortality all reinforced that prolonged functionality of the thymus correlated with both health and longevity.

Now the authors’ theories could be proven. For example they stated, “As expected thymic health was higher in female than male participants and significantly declined with age.” But more than that, the authors dug deep into associations “between metabolic and thymic health,” and concluded that “… these findings suggest a profound impact of actionable lifestyle choices on thymic health and may further clarify why healthy behaviour improves well-being and lifespan.”

Finally, their calculations using multiple chemical markers for inflammation, suggested that “lower thymic health was indeed associated with pro-inflammatory modifications of blood plasma protein levels, consistent with the presence of chronic inflammation. The pro-inflammatory pattern included increased levels of cytokines IL-6, IL-18 and OSM, as well as several CXCL chemokines, all of known relevance in systemic inflammatory diseases such as atherosclerosis, age-associated diseases such as arthritis, and cancer.”

In their final summary, the authors reach for the golden ring stating, “this study underscores the highly personalized nature of thymic health and emphasizes the previously unrecognized possible critical role of maintaining thymic health to preserve an agile, adaptive immune response that will accommodate long-term well-being and longevity.”

And it is understandable that they would end on such a “good news” clinical note. But we should take care not to bury the lead here: Generative AI, in assisting researchers in measuring what had previously been unmeasurable, is about to reset what is “possible” in pursuing health and longevity.

Tags: aging > AI > Generative AI > immunosenescence > Medicine Research > Nature > thymus

Happy Spring to All

Posted on | April 26, 2026 | 1 Comment

Mike Magee

As Winter gives way to Spring, I am reminded of what J. R. R. Tolkien famously wrote in a poem that appears in the first volume of The Lord of the Rings trilogy in 1954 (and that Bilbo Baggins sings as part of the song “I Sit Beside the Fire and Think” a half century later), “For still there are so many things that I have never seen: in every wood in every spring there is a different green.” Happy Spring to all.

https://lnkd.in/eSPBv587

Microchimerism – What’s in a word?

Posted on | April 24, 2026 | Comments Off on Microchimerism – What’s in a word?

Mike Magee

According to some estimates, a medical student learns upwards of 15,000 new words during the four years of training. But the scope of the language challenge extends well beyond these tightly circumscribed years since discoveries, and accompanying language to describe and label those new findings, accumulate throughout the entire span of one’s professional lifetime. And that was before AI, which will spawn a world of new discoveries and terminology.

One word that has risen in prominence with the arrival of this new century is microchimerism.

“Microchimerism is the presence of cells from one individual in another genetically distinct individual.”

A 2019 review laid out the basics:

- “Pregnancy is the main cause of natural microchimerism through transplacental bi-directional cell trafficking between mother and fetus.”

- “Furthermore, it is now known that microchimerism persists decades later both in mother and in her progeny.”

- “The consequences of pregnancy-related microchimerism are under active investigation.”

The most common cause of microchimerism is pregnancy. By 4 weeks of gestation fetal cells can be detected in the mother’s circulatory system even after stillbirth or miscarriage. The reverse is true as well. Maternal cells can be detected in newborns’ circulation and have been found embedded in babies’ skin, thymus, spleen, liver and thyroid.

The term first appeared in the medical literature in the 1970’s. It was intended to suggest both an unusual occurrence and an unlikely biologic mixture of living elements. “Chimera” is a mythological term associated with monstrous imagery – a creature composed of portions of multiple animals. In Greek mythology, the chimera breathes fire and possesses the body of a lion, a goat’s head arising from its back, and a snake’s head for a tail.

The description above of “cell trafficking” may seem a bit overwrought, but the modern day reality is that this phenomenon, and several other observations have triggered a radical reconsideration of the formerly rock-solid cornerstone of the field of Immunology – the capacity on a cellular and molecular level to distinguish “self” from “non-self.”

As one expert stated recently, “Numerous discoveries have focused attention on how immune responses are finely tuned by a range of contextual cues, including tissue signals, hygienist theory, molecular mimicry, symbiotic microbes, metabolic factors and epigenetic modifications… microchimeric cells in adults demonstrate that genetically foreign cells can be actively integrated into the host, challenging the simple assumption that ‘foreign’ equals unconditional attack… foreign elements might be tolerated (commensal bacteria, fetal cells, integrated viruses) or self-components might provoke aggression (autoimmunity, tumor immunity)…illustrating the remarkable plasticity of the immune system. The immune system is less a policeman patrolling a hard border than a manager of ongoing negotiations between host biology and environmental influences.”

On the leading edge of the field, “self” is no longer static, but “evolutionary” instead. In this brave new world, the vast collection of microorganisms that make up the microbiome are symbiotic, helpful, and actively evolving side by side with their “more human” cellular counterparts. Beyond the boundaries of a skin-deep innate immune system, the ecological determinants of health and illness are actively asking our systematic immune regulators to acknowledge, respond and adjust to a range of epigenetic determinants.

We’ve come a long way since Paul Ehrlich published his 1906 “General review of recent work in Immunity.” His publication at the time was a radical departure from philosopher John Locke’s 1690 “An Essay Concerning Human Understanding” exploring human memory and consciousness, reaching beyond the material boundaries of the human body. Biologic terms of human identity were now up for grabs.

Over the 20th century, the field grew side-by-side with modern understanding of infectious diseases, immunity, anaphylaxis, organ transplantation, rejection and more. The questions that were queued up, and largely addressed one by one, were probing – even disturbing.

- “How does the body fight and remember certain pathogens?”

- “What are the molecular complexes that mark ‘selfhood?’ Do all lifeforms possess similar molecular tags? Are mine different from yours, from even a twin?”

- “How is the system regulated so that it doesn’t over-react and attack itself as it appears to do with autoimmune diseases?”

- “If the body is host to trillions of microbes, many essential for normal physiology, does the immune system categorize them as foreign? Are they included in the extended sense of self?”

As the 21st century dawned, there seemed to be more questions than answers. But most immunologists believe that our human immune systems share three important characteristics: specificity, memory, and tolerance. Experts agree that these elements provide “intellectual scaffolding for the notion that ‘normal’ immunity was based on recognizing foreign antigens while ignoring the self.”

In recent years, if anything, exploration outside accepted norms for Immunology has only grown. Microchimerism, microbiomes, molecular mimicry enabling destructive autoimmune diseases like MS, all suggest that “self” is a moving target with remarkably porous boundaries.

But the field of Immunology is expanding far and wide. Here are three examples:

- Immunosenescence: The phenomenon of aging has attracted immunologists. “Immuno-senescence becomes evident after mid-life, as thymic involution restricts the output of naive T cells, and cumulative inflammatory signals, sometimes referred to as ‘inflammaging’, begin to erode the boundary that once reliably separated self from non-self. In advanced age, weaker pathogen defenses coexist with a paradoxical rise in auto-reactive phenomena, reflecting an overall decline in regulatory stringency.”

- Immunotherapy: Therapeutics are now front and center in the field. One remarkable example of success, with 5-year survival rates exceeding 50%, is metastatic melanoma. An oncologist at Sloan Kettering explains that “Immunotherapy works by stimulating the immune system to identify and destroy melanoma cells. Melanoma cells can evade the immune response by exploiting certain proteins known as checkpoints. Blocking these immune defense cells allows a direct attack on the cancer cells.”

- Neuro-immune Cross Talk: “A mutual expression of molecules from both domains (Immunology and Neurology) highlights a shared ‘cognitive’ capacity: the nervous and immune systems each interpret a vast array of molecular signals, be they hormonal, neurotransmitter, or pathogen-associated cues and generate responses that maintain organismal homeostasis. In effect, the immune system becomes akin to a sensory organ, attuned not solely to microbial invaders but also to internal physiological shifts, while the nervous system extends beyond classical neurotransmission to engage in immuno-modulatory functions.”

If “microchimerism” is the word of the decade, what word will come next? Here’s a prediction.

“Holobiont is defined as a biological system consisting of a host and its symbionts, which engage in continuous exchange of information and genetic material, leading to the development of a metabolome and hologenome that adapt and persist in response to environmental factors.”

Tags: holobiont > immunology > medical student vocabulary > microchimerism > non-self > self

Are You Ready For The Convergence of Metaphysics, Immunology, and Epigenetics?

Posted on | April 16, 2026 | 2 Comments

Mike Magee

Stanford neuroscientist, David Eagleman, reminded us this week that “A coherent explanation of consciousness eludes modern science.” That was his opening line in the New York Times book review of Michael Pollan’s latest effort, “A World Appears.” In it, Pollan asks innocently, “How does the brain generate a unified sense of self?”

According to Eagleman, “Pollan is not able to furnish the answers (no one can, yet), but he presents a captivating exploration, one that is highly personal and sensitive.” In this, he is not alone. Other fields are engaged in the same pursuit.

To begin with, there are the epigeneticists. They study “how our environment influences our genes by changing the chemicals attached to them.” In the hands of these scientists, genes are not “set in stone and (fully) predetermined.” Of late, these investigators have been unraveling how various chemicals, working on the surface of and inside cells, are constantly altering and adjusting how our genes work. Thus the name, since “epi” is Greek for “over, outside of, around.”

Other investigators, like Professor Eddy Keming Chen in the Department of Philosophy at the University of California San Diego, come at the problem from a different direction. He bolstered his PhD in Philosophy with a Master’s in Mathematical Physics and a graduate certificate in Cognitive Science. He teaches the PHIL 130 course on Metaphysics.

In the UCSD college syllabus, he tees up the question, “Why study metaphysics?” He promises enrollees that if they sign up, they’ll find a bit of magic in exploring tough questions, like: “Do we have free will? Is it compatible with causal determinism? What is the place of the mind and of the consciousness in a physical world?”

In the Jesuit world that I came from, such courses were mandatory as part of the core curriculum. In my own alma mater, they no longer carry the same mandate, but still remain alive and well.

Consider, for example, PHL 365 – a 3 credit course at Le Moyne College titled Philosophy of Mind. Once again, there is magic in the air for inquiring minds. Here is a description. “The main focus of the course will be the ‘mind-body problem’: can the existence of minds and mental states be reconciled with a thoroughly materialistic or physical view of the world? A second, closely connected focus will be: can mental states be implemented on a computer?”

Finally, if neither of these fields captures your imagination, you could follow the lead of Dr. Marie Duhamel, a member of the Board of Directors of the French Society of Proteomics, and research immunologist at the University of Lille. Her 2025 publication in Frontiers in Immunology, titled “Self or non self: end of a dogma?” is an epic exploration of the historical foundations of immunology, and begins this way, “The question of what constitutes the ‘self’ and how living organisms maintain their integrity against external threats has preoccupied thinkers from diverse fields, including philosophy, biology and medicine, for centuries.”

Reviewing more than a century of research that began with the birth of Immunology as a discipline, Dr. Duhamel and her co-author, Professor Michel Salzet, are forced to acknowledge that prior assumptions were not entirely incorrect but represent only a portion of the truth. In their words, “Conceptually, the entire premise that the immune system’s first job is to define what is self so as not to attack it is contradicted when we consider microchimerism and pregnancy tolerance, cases in which truly foreign (paternally derived) tissues persist without triggering rejection. Similarly, the fact that the human microbiome can be vital to normal function challenges the assumption that foreignness inevitably triggers aggression.”

Where then does the truth lie? According to the authors, “The role of the immune system is to manage complex ecological relationships by distinguishing beneficial or neutral foreign entities from harmful ones. The presence of ‘harmless foreign’ elements is a mainstay in the gut, skin, and oropharynx. Moreover, the integration of viruses into the genome, sometimes with evolutionary and developmental benefits, blurs the boundary between self and foreign in a fundamental, genomic sense. Endogenous retroviral elements constitute a significant portion of human DNA, yet no robust immune aggression is mounted against these deeply embedded viral sequences. This phenomenon invites researchers to conceive of ‘self’ as including certain categories of foreign genetic material that have become symbiotic or neutral over evolutionary time.”

Before they finish, the scientists humble themselves by allowing boundaries to blur as they move freely into uncharted philosophic territory. The “magic” is in full view, as they continue: “These concepts are consistent with the contemporary philosophy of immunology, which incorporates ecological and developmental insights, such as the observation that commensal microbes, fetal cells in the maternal circulation, or latent viruses are not automatically rejected as ‘non-self,’ but instead coexist with the host under specific regulatory conditions.”

Regardless of which road you travel, a common destination is beginning to appear on the horizon. The convergence of disciplines – Metaphysics, Immunology, Epigenetics – is no longer competitive but rather complementary. The remaining question: Are we as a species ready for this? Can we handle the truth?

Michael Pollan obviously thinks we are. His website asks the reader to travel “the cutting edge of the field, where scientists are entertaining more radical (and less materialist) theories of consciousness. A World Appears introduces us to ‘plant neurobiologists’ searching for the first flicker of consciousness in plants; scientists striving to engineer feelings into AI, and psychologists and novelists seeking to capture the felt experience of our slippery stream of consciousness.”

The epigeneticists are cautiously optimistic. In their words, “There’s a lot we don’t know. But that means there’s much left to discover.” But for the immunologists, with the promise of new treatments for cancer and aging, it’s full speed ahead. Their final words: “If this means embracing the ‘end of a dogma,’ it also heralds the dawn of a more integrative immunological science.”

Tags: a world appears > david eagleman > eddy keming chen > epigenetics > immunology > marie duhamel > metaphysics > michael Pollan > michel Salzet > non-self > self