“Justice delayed is justice denied . . . but not forever.”

Posted on | May 29, 2024

Mike Magee

The date was March 16, 1868. The speaker was a British statesman and former Prime Minister, William Ewart Gladstone. In a dispute that day, during vigorous debate in Parliament, Gladstone, in response to supportive cheers from colleagues, said, “But above all, if we be just men, we shall go forward in the name of truth and right, and bear this in mind, that when the case is ripe and the hour has come, justice delayed is justice denied.”

These words ring loud and clear this week for millions of Americans, as they await a verdict in the criminal trial of Donald Trump in New York. Whatever one’s political persuasion, most agree that the presiding judge, Juan Merchan, has been highly competent and efficient in orchestrating the historic trial and avoiding over-reacting to Trump’s labeling the judge “corrupt” and “conflicted.”

Judge Merchan has been a Justice of the New York Supreme Court since 2009. An experienced and balanced jurist, he has earned the respect of defense attorneys like New York lawyer Ron Kuby, who described him as “a serious jurist, smart and even tempered…a no-nonsense judge…always in control of the courtroom.”

Contrast this with the evaluations of Judge Aileen M. Cannon, who recently issued a “curious order” indefinitely delaying a date for Trump’s classified documents case after holding seven public hearings during the past 10 months. Her performance has caused legal analyst Alan Feuer, who has covered criminal justice for the New York Times since 1999, to comment, “The portrait that has emerged so far is that of an industrious but inexperienced and often insecure judge whose reluctance to rule decisively even on minor matters has permitted one of the country’s most important criminal cases to become bogged down in a logjam of unresolved issues.”

Needless to say, the contrast between the two judges is stark. The only thing they seem to have in common is that both were born in Colombia. Personalities and loyalties aside, each will forever be tied to Trump, and to their ability to deliver or delay justice. This is because the majority view of Americans is that “fairness” and the “rule of law” require “timely and efficient justice systems.”

What friends of Judge Cannon must consider is whether “justice delayed or disrupted on one dimension may still find its resolution in another.” That is to say, her actions, one way or another, have consequences, and could send the Trump narrative down any number of alleys that place the former President (and his family members) in even greater jeopardy. Witness, for example, the life journey of O.J. Simpson following his acquittal for the murder of Nicole Brown Simpson. Or Harvey Weinstein, freed on a technicality, only to be retried as more of his victims surface.

Purposeful delays in justice can boomerang on defendants. For example, the former President succeeded in stonewalling release of his tax returns for over a decade. But time ran out in 2024 when the IRS announced that he had “claimed massive financial losses twice” (in 2008 and 2012) and owed the government over $100 million. And that was just on a single holding – a Chicago skyscraper. Then there was the 2023 battery and defamation verdict for the 1990s rape of E. Jean Carroll. The $5 million judgment against him took nearly three decades to snare him in justice’s web. And in that same year, New York attorney general Letitia James convinced Justice Arthur Engoron that the Trump Organization was guilty of massive real estate fraud, a finding that effectively revoked its license to operate New York-based properties.

What we as a society are currently witnessing is that timely resolutions are central to maintaining “public trust.” People must move on with their lives, and can only do so if social order and public confidence in justice are maintained. Trump’s success with a strategy of delay seems to be waning. The current trials remind us that continuous improvement will always be the order of the day for our legal system. Access, efficiency, equity, and timely justice for all.

And should he somehow wiggle his way out of the current downtown New York holding cell, he and his protectors will soon enough learn that “Justice delayed is justice denied . . . but not forever.”

Tags: aileen cannon > arthur engoron > e. jean carroll > juan merchan > justice > o.j.simpson > trials > trump

AI Can Talk, But Can It Think?

Posted on | May 22, 2024

Mike Magee

OpenAI says its new GPT-4o is “a step towards much more natural human-computer interaction,” and is capable of responding to your inquiry “with an average 320 millisecond (delay) which is similar to a human response time.” So it can speak human, but can it think human?

The “concept of cognition” has been a scholarly football for the past two decades, centered primarily on “Darwin’s claim that other species share the same ‘mental powers’ as humans, but to different degrees.” But how about genAI-powered machines? Do they think?

The first academician to attempt to define the word “cognition” was Ulric Neisser in the first ever textbook of cognitive psychology in 1967. He wrote that “the term ‘cognition’ refers to all the processes by which the sensory input is transformed, reduced, elaborated, stored, recovered, and used. It is concerned with these processes even when they operate in the absence of relevant stimulation…”

The word cognition is derived from “Latin cognoscere ‘to get to know, recognize,’ from assimilated form of com ‘together’ + gnoscere ‘to know’ …”

Knowledge and recognition would not seem to be highly charged terms. And yet, in the years following Neisser’s publication, there has been an increasingly intense, and sometimes heated, debate between psychologists and neuroscientists over the definition of cognition.

The focal point of the disagreement has (until recently) revolved around whether the behaviors observed in non-human species are “cognitive” in the human sense of the word. The discourse in recent years has bled over into the fringes to include the belief by some that plants “think” even though they are not in possession of a nervous system, or the belief that ants communicating with each other in a colony are an example of “distributed cognition.”

What scholars in the field do seem to agree on is that no suitable definition for cognition exists that will satisfy all. But most agree that the term encompasses “thinking, reasoning, perceiving, imagining, and remembering.” Tim Bayne, PhD, a Melbourne-based professor of philosophy, adds that these various qualities must be able to be “systematically recombined with each other,” and not be simply triggered by some provocative stimulus.

Allen Newell, PhD, a professor of computer science at Carnegie Mellon, sought to bridge the gap between human and machine cognition when he published a paper in 1958 that proposed “a description of a theory of problem-solving in terms of information processes amenable for use in a digital computer.”

Machines have a leg up in the company of some evolutionary biologists who believe that true cognition involves acquiring new information from various sources and combining it in new and unique ways.

Developmental psychologists carry their own unique insights from observing and studying the evolution of cognition in young children. What exactly is evolving in their young minds, and how does it differ from, but eventually lead to, adult cognition? And what about the explosion of screen time?

Pediatric researchers, confronted with AI-obsessed youngsters and worried parents, are coming at it from the opposite direction. With 95% of 13- to 17-year-olds now using social media platforms, machines are a developmental force, according to the American Academy of Child and Adolescent Psychiatry. The machine has risen in status and influence from a sideline assistant coach to an on-field teammate.

Scholars admit “It is unclear at what point a child may be developmentally ready to engage with these machines.” At the same time, they are forced to admit that the technology tidal waves leave few alternatives. “Conversely, it is likely that completely shielding children from these technologies may stunt their readiness for a technological world.”

Bence P Ölveczky, an evolutionary biologist from Harvard, is pretty certain what cognition is and is not. He says it “requires learning; isn’t a reflex; depends on internally generated brain dynamics; needs access to stored models and relationships; and relies on spatial maps.”

Thomas Suddendorf, PhD, a research psychologist from New Zealand who specializes in early childhood and animal cognition, takes a more fluid and nuanced approach. He says, “Cognitive psychology distinguishes intentional and unintentional, conscious and unconscious, effortful and automatic, slow and fast processes (for example), and humans deploy these in diverse domains from foresight to communication, and from theory-of-mind to morality.”

Perhaps the last word on this should go to Descartes. He believed that humans’ mastery of thoughts and feelings separated them from animals, which he considered to be “mere machines.”

Were he with us today, and witnessing generative AI’s insatiable appetite for data, its hidden recesses of learning, the speed and power of its insurgency, and human uncertainty about how to turn the thing off, perhaps his judgement of these machines would be less disparaging; more akin to Mira Murati, OpenAI’s chief technology officer, who announced with some degree of understatement this month, “We are looking at the future of the interaction between ourselves and machines.”

Tags: allen newell > Bence Olveczky > cognition > cognitive psychology > Descartes > developmental biology > evolutionary biology > GPT-4o > Mira Murati > neuroscience > OpenAI > Tim Bayne

May 17, 2024 Address at Presidents College/U. of Hartford: “Artificial Intelligence (AI) and The Future of American Medicine.”

Posted on | May 18, 2024

Artificial Intelligence (AI) and the Future of American Medicine

Link here: https://www.healthcommentary.org/about/artificial-intelligence-ai-and-the-future-of-medicine/

GPT-4o: “From babble to concordance to inclusivity…”

Posted on | May 14, 2024 | 3 Comments

Mike Magee

If you follow my weekly commentary on HealthCommentary.org or THCB, you may have noticed over the past 6 months that I appear to be obsessed with mAI, or Artificial Intelligence intrusion into the health sector space.

So today, let me share a secret. My deep dive has been part of a long preparation for a lecture (“AI Meets Medicine”) I will deliver this Friday, May 17, at 2:30 PM in Hartford, CT. If you are in the area, it is open to the public. You can register to attend HERE.

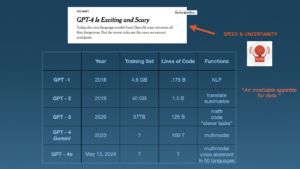

The image above is a portion of one of 80 slides I will cover over the 90-minute presentation on a topic that is massive, revolutionary, transformational and complex. It is also a moving target, as illustrated in the final row above, which I added this morning.

The addition was forced by Mira Murati, OpenAI’s chief technology officer, who announced from a perch in San Francisco yesterday that, “We are looking at the future of the interaction between ourselves and machines.”

The new application, designed for both computers and smartphones, is GPT-4o. Unlike prior members of the GPT family, which distinguished themselves by their self-learning generative capabilities and an insatiable thirst for data, this new application is not so much focused on the search space, but instead creates a “personal assistant” that is speedy and conversant in text, audio and image (“multimodal”).

OpenAI says this is “a step towards much more natural human-computer interaction,” and is capable of responding to your inquiry “with an average 320 millisecond (delay) which is similar to a human response time.” And they are fast to reinforce that this is just the beginning, stating on their website this morning “With GPT-4o, we trained a single new model end-to-end across text, vision, and audio, meaning that all inputs and outputs are processed by the same neural network. Because GPT-4o is our first model combining all of these modalities, we are still just scratching the surface of exploring what the model can do and its limitations.”

It is useful to remember that this whole AI movement, in Medicine and every other sector, is about language. And as experts in language remind us, “Language and speech in the academic world are complex fields that go beyond paleoanthropology and primatology,” requiring a working knowledge of “Phonetics, Anatomy, Acoustics and Human Development, Syntax, Lexicon, Gesture, Phonological Representations, Syllabic Organization, Speech Perception, and Neuromuscular Control.”

The notion of instantaneous, multimodal communication with machines has seemingly come out of nowhere but is actually the product of nearly a century of imaginative, creative and disciplined discovery by information technologists and human speech experts, who have only recently fully converged with each other. As paleolithic archeologist Paul Pettitt, PhD, puts it, “There is now a great deal of support for the notion that symbolic creativity was part of our cognitive repertoire as we began dispersing from Africa.” That is to say, “Your multimodal computer imagery is part of a conversation begun a long time ago in ancient rock drawings.”

Throughout history, language has been a species accelerant, a secret power that has allowed us to dominate and rise quickly (for better or worse) to the position of “masters of the universe.” The shorthand: We humans have moved “From babble to concordance to inclusivity…”

GPT-4o is just the latest advance, but is notable not because it emphasizes the capacity for “self-learning,” which the New York Times correctly bannered as “Exciting and Scary,” but because it is focused on speed and efficiency in the effort to now compete on an even playing field with human-to-human language. As OpenAI states, “GPT-4o is 2x faster, half the price, and has 5x higher (traffic) rate limits compared to GPT-4.”

Practicality and usability are the words I’d choose. In the company’s words, “Today, GPT-4o is much better than any existing model at understanding and discussing the images you share. For example, you can now take a picture of a menu in a different language and talk to GPT-4o to translate it, learn about the food’s history and significance, and get recommendations.”

In my lecture, I will cover a great deal of ground, as I attempt to provide historic context, relevant nomenclature and definitions of new terms, and the great potential (both good and bad) for applications in health care. As many others have said, “It’s complicated!”

But as this week’s announcement in San Francisco makes clear, the human-machine interface has blurred significantly. Or as Mira Murati put it, “You want to have the experience we’re having — where we can have this very natural dialogue.”

Tags: artificial intelligence > GPT-4o > health care > language > mAI > Mira Murati > multimodal > OpenAI

Justice Amy Coney Barrett Has a Mortal Sin On Her Conscience.

Posted on | May 8, 2024 | 2 Comments

Mike Magee

Justice Amy Coney Barrett, Catholic and conservative, likely found this week’s Health Affairs article on violence toward women in Red states where abortion was banned uncomfortable reading. Its authors state that the “rapidly increasing passage of state legislation has restricted or banned access to abortion care” and has triggered an increase in “intimate partner violence” in girls and women aged 10 to 44. The authors were able to document that each targeted action to restrict access to abortion providers delivered a 3.4% rise in violence against these pregnant girls and women.

It is not as if the Supreme Court judges weren’t warned that their ruling would immediately trigger reactivation of century-old laws designed to reinforce second-class citizenship of women. Nor were they unaware that red state captive legislatures would likely go “fast and furious” in pursuit of fetal “personhood” regardless of the potential loss of life and limb to the mothers. And yet, faced with radical evangelical leaders’ directed attacks on IVF, even conservative justices (excepting Clarence Thomas) seemed genuinely surprised.

For experts in maternal-fetal health, the findings come as no surprise. A landmark study in BMC Medicine in 2014 found that up to 22% of women seeking abortion were the victims of intimate partner violence. The study concluded that “Policies restricting abortion provision may result in more women being unable to terminate unwanted pregnancies, potentially keeping them in contact with violent partners, and putting women and their children at risk.”

It is not as if “Intimate Partner Violence” is uncommon in the U.S. A New England Journal of Medicine article, published four months after the Dobbs decision was handed down, documented that “Overall, one in three women in the United States experiences contact sexual violence, physical violence, or stalking by an intimate partner (or a combination of these) at some point, with higher rates among women in historically marginalized racial or ethnic groups.”

That data was available on government websites well before the Justices announced the Dobbs decision on June 24, 2022. Red states already possessed unfavorable demographics when it came to violence against women. Liberal policies toward guns, combined with sub-standard safety nets in these states, have created a toxic health environment for young women. As data supports, “Most vulnerable in this new legal landscape will be people who have limited access to resources and services and inadequate protection against violence, especially those living in overburdened communities — primarily young, low-income women from historically marginalized racial or ethnic groups…The majority of Intimate Partner Violence related homicides involve firearms.”

One must wonder whether Justice Amy Coney Barrett, the only conservative woman on the Court, second-guessed herself when she read the November article’s predictions of the fallout of Dobbs. It read, “Legal restrictions on reproductive health care and access to abortion will leave people more vulnerable to control by their abusers. Policies permitting easier access to firearms, including the ability to carry guns in public, will further jeopardize survivors’ safety.”

With Justice Barrett’s support, the Supreme Court had voted on two successive days in favor of less restricted access to guns, and more restricted access to abortion. In New York State Rifle & Pistol Association v. Bruen, decided one day before the Dobbs case, Barrett and conservatives struck down New York’s requirement that its citizens declare a “special need for self-defense” to carry a handgun outside the home. Justice Stephen Breyer, in his dissent in that case, made clear the carnage that lay ahead. He cited the fact that “women are five times as likely to be killed by an intimate partner if the partner has access to a gun.”

If Justice Barrett is unable to somehow make this right in the future, she will be left (as we Catholics like to say) to carry this sin on her conscience. And to be clear, for the women of America, this sin is “mortal” not “venial.” Stipulations for a “mortal sin”, according to the Catholic religion are:

1. “Serious or grave matter. This often means that the action is clearly evil or severely disordered.”

2. “Sufficient knowledge or reflection. This means we know we are committing a sinful act and that we have had sufficient reflection for it to be intentional.”

3. “Full consent of the will. This means that we must freely choose to commit a grave sin in order for it to be a mortal sin.”

Tags: amy coney barrett > banned abortions > BMC Medicine > bruen case > catholicism > conservatives > Dobbs > health affairs > mortal sin > supreme court > venial sin > womens health care

Will AI Revolutionize Surgical Care? Yes, But Maybe Not How You Think.

Posted on | May 2, 2024 | 2 Comments

Mike Magee

If you talk to consultants about AI in Medicine, it’s full speed ahead. GenAI assistants, “upskilling” the workforce, reshaping customer service, new roles supported by reallocation of budgets, and always with one eye on “the dark side.”

But one area that has been relatively silent is surgery. What’s happening there? In June 2023, the American College of Surgeons (ACS) weighed in with a report that largely stated the obvious. They wrote, “The daily barrage of news stories about artificial intelligence (AI) shows that this disruptive technology is here to stay and on the verge of revolutionizing surgical care.”

Their summary self-analysis was cautious, stating: “By highlighting tools, monitoring operations, and sending alerts, AI-based surgical systems can map out an approach to each patient’s surgical needs and guide and streamline surgical procedures. AI is particularly effective in laparoscopic and robotic surgery, where a video screen can display information or guidance from AI during the operation.”

It is increasingly obvious that the ACS is not anticipating an invasion of robots. In many ways, this is understandable. The operating theater does not reward hyperbole or flashy performances. In an environment where risk is palpable, and simple tremors at the wrong time, and in the wrong place, can be deadly, surgical players are well-rehearsed and trained to remain calm, conservative, and alert members of the “surgical team.”

Johnson & Johnson’s AI surgery arm, MedTech, brands surgeons as “high-performance athletes” who are continuous trainers and learners…but also time-constrained “busy surgeons.” The heads of their AI business unit say that they want “to make healthcare smarter, less invasive, more personalized and more connected.” As a business unit, they decided to focus heavily on surgical education. “By combining a wealth of data stemming from surgical procedures and increasingly sophisticated AI technologies, we can transform the experience of patients, doctors and hospitals alike. . . When we use AI, it is always with a purpose.”

The surgical suite is no stranger to technology. Over the past few decades, lasers, laparoscopic equipment, microscopes, embedded imaging, all manner of alarms and alerts, and stretcher-side robotic work stations have become commonplace. It’s not like mAI is ACS’s first tech rodeo.

Mass General surgeon Jennifer Eckhoff, MD, sees the movement in broad strokes. “Not surprisingly, the technology’s biggest impact has been in the diagnostic specialties, such as radiology, pathology, and dermatology.” University of Kentucky surgeon Danielle Walsh, MD, also chose to look at other departments. “AI is not intended to replace radiologists – it is there to help them find a needle in a haystack.” But make no mistake, surgeons are aware that change is on the way. In the view of University of Minnesota surgeon Christopher Tignanelli, MD, the future is now. He says, “AI will analyze surgeries as they’re being done and potentially provide decision support to surgeons as they’re operating.”

AI robotics as a challenger to their surgical roles, most believe, is pure science fiction. But as a companion and team member, most see the role of AI increasing, and increasing rapidly in the O.R. The greater the complexity, the more the need. As Mass General’s Eckhoff says, “Simultaneously processing vast amounts of multimodal data, particularly imaging data, and incorporating diverse surgical expertise will be the number one benefit that AI brings to medicine. . . Based on its review of millions of surgical videos, AI has the ability to anticipate the next 15 to 30 seconds of an operation and provide additional oversight during the surgery.”

Because surgery is the powerful profit center for most hospitals, dollars are likely to keep up with visioning as long as the “dark side of AI” is kept at bay. That includes “guidelines and guardrails” as outlined by new, rapidly forming elite academic AI collaboratives, like the Coalition for Health AI. Quality control, acceptance of liability and personal responsibility, and patient confidence and trust are all prerequisites. But the rewards, in the form of diagnostics, real-time safety feedback, precision and tremor-less technique, speed and efficient execution, and improved outcomes, likely will more than make up for the investment in time, training, and dollars.

Tags: ACS > AI > AI in Surgery > Christopher Tignanelli MD > Coalition for Health AI > Danielle Walsh MD > Healthcare Technology > J&J > jennifer Eckhoff MD > MedTech

The AI Enhanced Personal Health Record – The Key That Unlocks The Door To Universal Health Care.

Posted on | April 23, 2024

Mike Magee

In a system that controls 1/5 of the U.S. GDP; one that in 2017 employed 16 non-clinical workers for every physician; and one that under-performs at every turn (most notably for women and children, the poor, and people of color); one would be hard pressed to identify a better target for AI-driven national reform.

Pessimists say we’ve been down this way before and that the various arms of the Medical Industrial Complex will place enough roadblocks in the way to slow down this transforming steamroller.

But optimists suggest that this time is different, and that the entry of generative Artificial Intelligence (or “Augmented Intelligence” – the AMA’s preferred term for AI) is, in fact, a real game changer – and that your Personal Health Record is the key that unlocks the door.

Surveys show that 8 in 10 health care execs already use generative AI in some form, and that 2/3 of physicians see advantages for them and their patients. From clerical to clinical to discovery, opportunity abounds. A technology that can self-correct its own errors and is easy enough to use that health professionals and the people they care for start on an even playing field sounds like a “safe bet.”

But seasoned health reformers increasingly point to a third factor – the infrastructure already in place with Electronic Health Records (EHRs), and the knowledge and connectivity we’ve built as we’ve overcome obstacles over the past three decades. Consider, they say, where we have been, and how far we have come.

In my father’s day, and throughout much of my own training, paper “patient charts” ruled the day. As I began my surgical training in 1973, the value of electronic health records (EHRs) was still largely theoretical, and their usefulness was largely defined as the capacity to finally ensure that physician handwriting was legible.

In the first two decades of experimentation with various hybrid forms of EHRs, the focus was on hospital billing and scheduling systems supported by large mainframe computers with wired terminals and limited storage. The notion of physician entry was seen as largely impractical on both behavioral and financial grounds. By 1990, early medical IT dreamers were imagining a conversion as personal computing emerged (“affordable, powerful, and compact”), fed by data flowing over the Internet.

In 1992, the effort received a giant boost from the Institute of Medicine, which formally recommended a conversion over time from a paper-based to an electronic data system. While the sparks of that dream flickered, fanned by “true-believers” who gathered for the launch of the International Medical Informatics Association (IMIA), hospital administrators dampened the flames, citing conversion costs, unruly physicians, demands for customization, liability, and fears of generalized workplace disruption.

True believers and tinkerers chipped away on a local level. The personal computer, increasing Internet speed, local area networks, and niceties like an electronic “mouse” to negotiate new drop-down menus, alert buttons, pop-up lists, and scrolling from one list to another, slowly began to convert physicians and nurses who were not “fixed” in their opposition.

On the administrative side, obvious advantages in claims processing and document capture fueled investment behind closed doors. And entrepreneurs were already predicting that “data would be king” in the future. If you could eliminate filing and retrieval of charts, photocopying, and delays in care, there had to be savings to fuel future investments.

What if physicians had a “workstation,” movement leaders asked in 1992. While many resisted, most physicians couldn’t deny that the data load (results, orders, consults, daily notes, vital signs, article searches) was only going to increase. Shouldn’t we at least begin to explore better ways of managing data flow? Might it even be possible in the future to access a patient’s hospital data in your own private office and post an order without getting a busy floor nurse on the phone?

By the early 1990s, individual specialty locations in the hospital didn’t wait for general consensus. Administrative computing began to give ground to clinical experimentation using off-the-shelf and hybrid systems in infection control, radiology, pathology, pharmacy, and laboratory. The movement then began to consider more dynamic nursing unit systems.

By now, hospitals’ legal teams were engaged. State laws required that physicians and nurses be held accountable for the accuracy of their chart entries through signature authentication. Electronic signatures began to appear, and this was occurring before regulatory and accrediting agencies had OK’d the practice.

By now, medical and public health researchers realized that electronic access to medical records could be extremely useful, but only if the data entry was accurate and timely. Already misinformation was becoming a problem. Whether for research or clinical decision making, partial accuracy was clearly not good enough. Add to this a sudden explosion of clinical decision support tools, initially focused on prescribing safety, with flags for drug-drug interactions and drug allergies. Interpretation of lab specimens and flags for abnormal lab results quickly followed.

As local experiments expanded, the need for standardization became obvious to commercial suppliers of EHRs. In 1992, suppliers and purchasers embraced Health Level Seven (HL7) as “the most practical solution to aggregate ancillary systems like laboratory, microbiology, electrocardiogram, echocardiography, and other results.” At the same time, the National Library of Medicine engaged in the development of a Unified Medical Language System (UMLS).

As health care organizations struggled along with financing and implementation of EHRs, issues of data ownership, privacy, informed consent, general liability, and security began to crop up. Uneven progress also shed a light on inequities in access and coverage, as well as racially discriminatory algorithms.

In 1996, the government instituted HIPAA, the Health Insurance Portability and Accountability Act, which focused protections on your “personally identifiable information” and required health organizations to ensure its safety and privacy.

All of these programmatic challenges, as well as continued resistance by physicians jealously guarding “professional privilege,” meant that by 2004, only 13% of health care institutions had a fully functioning EHR, and roughly 10% were still wholly dependent on paper records. As laggards struggled to catch up, mental and behavioral records were incorporated in 2008.

A year later, the federal government weighed in with the 2009 Health Information Technology for Economic and Clinical Health Act (HITECH). It incentivized organizations to invest in and document “EHRs that support ‘meaningful use’ of EHRs”. Importantly, it also included a “stick” – failure to comply reduced an institution’s rate of Medicare reimbursement.

By 2016, EHRs were rapidly becoming ubiquitous in most communities, not only in hospitals, but also in insurance companies, pharmacies, outpatient offices, long-term care facilities and diagnostic and treatment centers. Order sets, decision trees, direct access to online research data, barcode tracing, voice recognition and more steadily ate away at weaknesses, and justified investment in further refinements.

The health consumer, in the meantime, was rapidly catching up. By 2014, “Personal Health Records” was a familiar term. A decade later, they are a common offering in most integrated health care systems.

All of which brings us back to generative AI, and new multimodal AI entrants, like ChatGPT-4 and Genesis. They will not be starting from scratch, but are building on all the hard-fought successes above.

Multimodal, large language, self-learning mAI is limited by only one thing – data. And we are literally the source of that data. Access to us – each of us and all of us – is what is missing.

What would you, as one of the 333 million people in the U.S., expect to offer in return for universal health insurance and reliable access to high quality basic health care services?

Would you be willing to provide full and complete de-identified access to all of your vital signs, lab results, diagnoses, external and internal images, treatment schedules, follow-up exams, clinical notes, and genomics? An answer of “yes” could easily trigger the creation of universal health coverage and access in America.

Two key questions remain:

- How will mAI keep up? Answer: Generative AI is self-correcting and self-improving based on data input. Strong regulatory oversight will be essential. But with these protections in place, health coverage in the future will likely require you to provide all your de-identified data in return for access to care and coverage. You are now your data.

- How will all that data be stored? Answer: New chips, like those provided by Nvidia and modeled after gamer chips originated by Atari, are better able to manage the load, but at what cost? Dollars for sure, but also extraordinary consumption of energy and water for cooling.

Tags: CDS > ChatGPT > clinical decision support > e signatures > EHR > genAI > Genesis > health reform > HIPAA > HITECH > HL7 > mAI > patient chart > PHR > physician workstation